Digital Initiatives and AD&S have been working hard on the discovery project, with January 1st, 2019 as the target date to take the software out of beta. We are excited about the progress being made and the plan that will take us into the new year. With this most recent update, you’ll see some exciting new concepts as well as the integration of a good deal of backend development designed to make the tool faster, easier to manage, and more consistent.

But first, Terry would like to thank some people for their continued hard work on the project…

Stephen, our lead developer, has taken it upon himself to learn a variety of new skills and tools to really push the direction of this project. Early on, we challenged him to find a better technical model than what was currently available – something that would be easy for us to manage and potentially easy for us to share. And he’s delivered, incorporating modern web development techniques to develop a lightweight tool made for today’s internet and devices.

We’d also like to highlight the UX partnership between AD&S and Digital Initiatives. When we started the discovery project, we wanted to model a different type of development – one that put users at the center. This has at times been a challenge for the Libraries. As we all know, it can be difficult to get a good representative sample when working with such a large population, so we had to look for partners. Early this summer, we reached out to the OSU student government, and they have been working in partnership with the Libraries to identify and provide students for testing. It has been a fantastic partnership, and one that is giving us a much larger community to draw feedback from.

Michelle has been regularly interviewing and working with our user community to understand some of the pain points in the new discovery tool. In general, the feedback has fallen into a handful of specific categories:

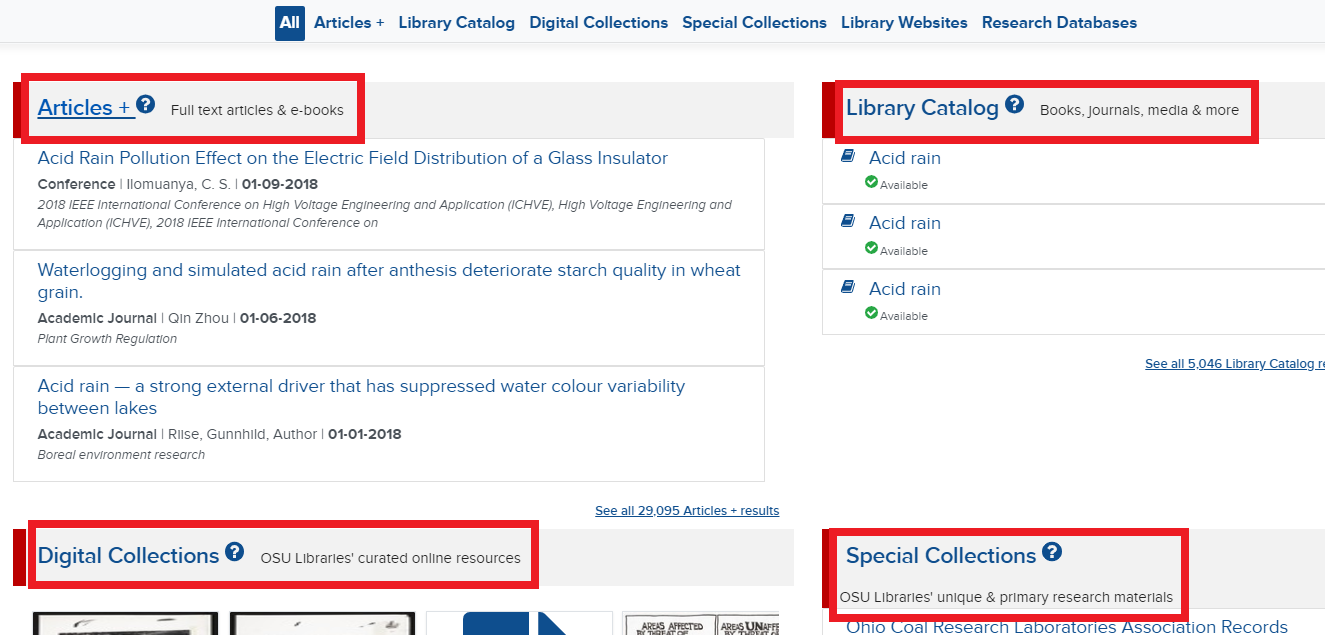

- Confusion around the interface – while we have tried to reduce the reliance on library jargon (like “facet”), the many bentos and the scope of content are proving to be barriers. The bentos give a lot of context, but ultimately, one of the challenges for students is a lack of understanding of how we organize collections in the Libraries.

- Inconsistent links – we’ve been having trouble with some interactions between the proxy and content returned via EDS. This hasn’t been completely resolved, but it should be soon. However, it has led us to overcompensate in the interface by putting links everywhere. Students are asking that when we link something (like an article title), the results are consistent and reliable. To that end, we have reduced some unnecessary data and linking options.

- Simplifying Workflows – the process of getting to help or items is still taking too many clicks. We need to find ways to continue reducing the number of decisions made to get to valuable content.

Discovery Refresh

With this in mind, we spent a lot of time working on the back end to fix reliability and refresh issues, to address speed and performance concerns, and to take a hard look at how we present content to the Libraries. So, with this refresh, you will notice a few new things. First, there is a new “view”. While the default view continues to be bentos broken out by category, we have introduced a more integrated list view as a new option for Discovery.

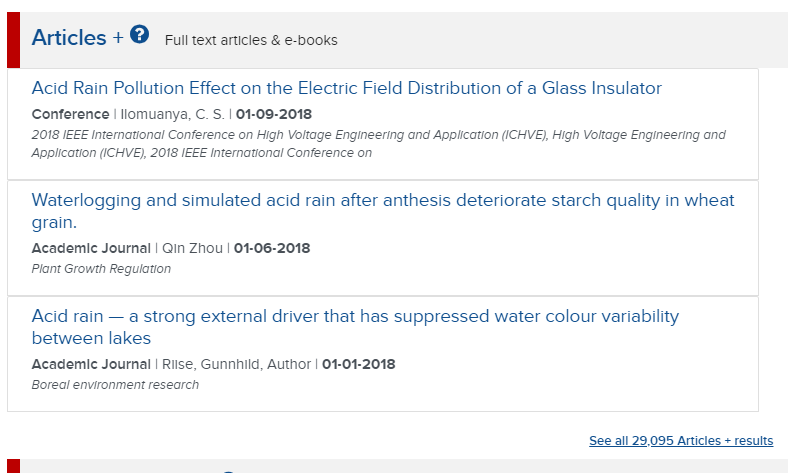

This view has just the Articles+ and All Library Content results. Users can toggle to this view by clicking the list icon in the upper left of the application. The view allows us to put all the content in the Libraries in context against the user’s search. This option is provided specifically to address user feedback – to simplify finding content in the Libraries. For users who still want to focus on a particular type of content, the focus views continue to be available. We’ll be performing usability testing on this new interface and making a decision about the default view based on extensive feedback.

In addition to the new user interface option, we’ve implemented an updated indexing core and session management. This will have two important impacts.

- It will allow us to develop more granular indexing rules around content types – enabling better discovery. In our previous model, books shared the same indexing rules as EAD files. In the new model, these can be different. That’s enabling us to really push our indexing tooling.

- In the current discovery tool, pages would sometimes hang. In some cases this was related to how events (actions) occurred within different browsers. To fix this, Stephen has implemented a shared session manager that will create a more reliable and faster user experience.

Going Forward

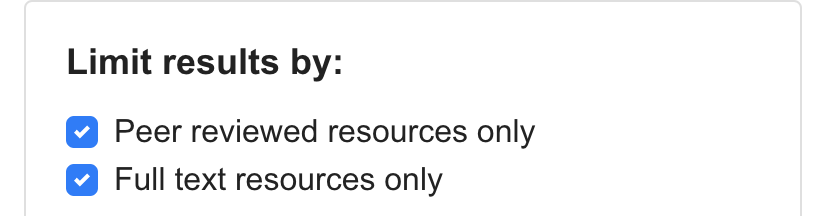

With these changes in place, AD&S and Digital Initiatives will be shifting Discovery development from feature development to quality development. This means that over the next month, we will be specifically addressing user feedback – targeting pain points and simplifying workflows. This work will range from new limits in Articles+, such as limiting results to OSU-owned content and peer-reviewed materials, to a more straightforward process for users looking to find e-books or pass a search to OhioLINK.

Finally, Michelle is actively looking for feedback from the Libraries. In addition to working with students, she has done a number of usability sessions with faculty and staff in the Libraries. If you want to be a part of that process, or know a student who might be interested, please let her know.